Portability and reusability are cornerstones of modern software development. Docker Desktop’s robust tools enable developers to containerize any application for deployment on any cloud platform. These portable and reusable applications are ready to deploy wherever a project needs them.

Because Docker is platform and language-agnostic, it complements complex architectures that leverage multi-cloud and hybrid cloud approaches. Because we can containerize applications written in any language, we can choose the best tool for each microservice. And with the ability to deploy on almost any cloud, organizations are free to leverage multi-cloud to its full potential.

In this article, we’ll discuss how Docker accomplishes containerization and explore a few use cases where we can benefit from containerizing applications.

Containerization Using Docker

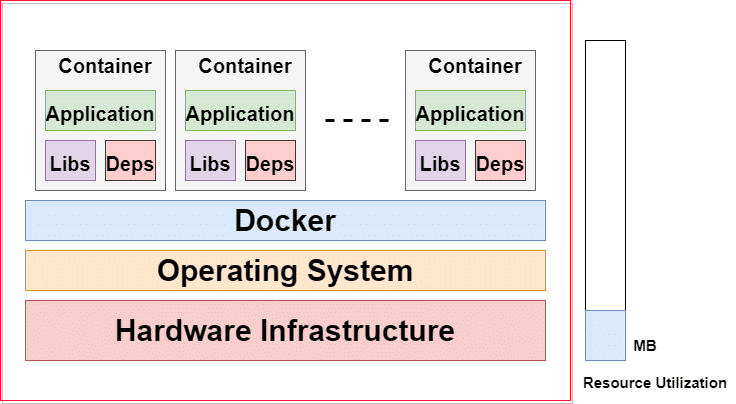

Containerization has gained popularity in software development. It’s the next evolution in virtualization. A container image is a lightweight, standalone, executable software package with everything the application needs to run, like code, libraries, and settings. It separates the application from its environment to ensure that the application works uniformly in any environment.

Containerization uses operating system-based virtualization. Multiple containers can run on the same machine, sharing the underlying host machine’s operating system kernel. The containers run as separate processes, isolated using private namespaces. Containerization allows Docker to package an application with dependencies to work seamlessly in any computing environment.
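To make the idea of private namespaces more concrete, here is a minimal Python sketch that lists the namespaces the current process belongs to. It assumes a Linux host (or a Linux container), where the kernel exposes this information under `/proc/self/ns`; the function name `list_namespaces` is our own choice for illustration, not part of Docker.

```python
import os

NS_DIR = "/proc/self/ns"  # Linux-only; this path is absent on macOS and Windows


def list_namespaces(ns_dir=NS_DIR):
    """Map each namespace type (pid, net, mnt, ...) to its kernel identifier."""
    namespaces = {}
    try:
        entries = sorted(os.listdir(ns_dir))
    except OSError:
        return namespaces  # not Linux, or /proc is unavailable
    for name in entries:
        try:
            # Each entry is a symlink whose target looks like "pid:[4026531836]"
            namespaces[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue  # skip entries we are not permitted to read
    return namespaces


if __name__ == "__main__":
    for name, ident in list_namespaces().items():
        print(f"{name:20} {ident}")
```

Running the script once on the host and once inside a container should typically show different identifiers for namespaces such as `pid`, `net`, and `mnt`, which is the isolation described above, even though both processes share the same kernel.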

The underlying hardware infrastructure has the operating system installed on it. We then install Docker on the operating system.

Docker is responsible for bundling an application or function and all its dependencies, libraries, configuration files, and other necessary parts and parameters into a single object: a container. It then manages the container.

The containers virtualize the CPU, memory, storage, and network resources at the operating system level. The self-contained units are compact, making it easy to deploy and run them on any Docker-enabled environment. Docker created the industry standard for containers with portability as a key ingredient.

Docker containers are language and tech-agnostic, so we can run any application on a Docker container, whether Node.js, Java, or .NET. We can use the language and tech stack that best fit our application’s needs.

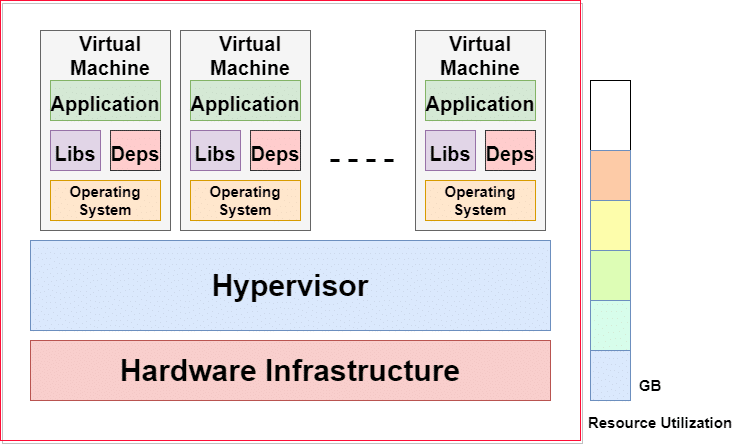

Let’s compare this approach with a more traditional, widely used way of achieving virtualization: virtual machines (VMs).

VMs use hardware-based virtualization, turning the physical machine into multiple virtual machines. A hypervisor makes this approach possible.

The hypervisor runs on top of the hardware, so the host machine supports various guest virtual machines. Each virtual machine contains its own operating system, dependencies, and application.

The VM approach uses more underlying hardware resources because multiple entire operating systems are running. VMs tend to be tens of gigabytes in size, while containers are lightweight, usually megabytes, and start in seconds.

Docker isolates workloads to a lesser degree than VMs do, as the containers share the host machine’s operating system kernel. In contrast, VMs are isolated from each other at the hardware level because each one runs its own kernel rather than relying on a shared one. So, each VM can run a different operating system. For example, we can run Windows-based and Linux-based applications on the same hypervisor.

Containers are a considerable improvement over virtual machines, but this isn’t to say they can completely replace them. Both approaches have use cases where one excels, and sometimes they work well together. We can benefit from the advantages of both technologies.

Containerization Use Cases

Docker eliminates the challenges of differences across multiple environments. Let’s examine some use cases where containerization is a beneficial approach.

Testing an Application

Many containerized application versions are readily available on Docker Hub. When we want to test operating systems, databases, and other standard services and tools, we might find running those applications’ Docker images more convenient than installing and setting them up correctly.

To do this, we run the `docker run` command with the image’s name. For example, to run a MongoDB instance from the official image on Docker Hub, we use the command `docker run mongo`.

We can also run as many instances of the application as we’d like.
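Running several instances side by side mostly means giving each container its own name and host port. The following Python sketch builds the `docker run` commands for a few MongoDB test instances. The `mongo-test-N` naming scheme and the choice of consecutive host ports are assumptions made for illustration; the flags themselves (`--detach`, `--name`, `--publish`) are standard `docker run` options.

```python
import shlex

MONGO_IMAGE = "mongo"   # official MongoDB image name on Docker Hub
MONGO_PORT = 27017      # MongoDB's default port inside the container


def mongo_run_command(index, base_host_port=27017):
    """Build a `docker run` command for the index-th test instance."""
    host_port = base_host_port + index
    return [
        "docker", "run",
        "--detach",                                 # run in the background
        "--name", f"mongo-test-{index}",            # hypothetical naming scheme
        "--publish", f"{host_port}:{MONGO_PORT}",   # host port -> container port
        MONGO_IMAGE,
    ]


def mongo_run_commands(count):
    """Build commands for `count` instances with unique names and host ports."""
    return [mongo_run_command(i) for i in range(count)]


if __name__ == "__main__":
    for argv in mongo_run_commands(3):
        print(shlex.join(argv))
```

Each printed line can be pasted into a terminal, or the argument lists can be passed to `subprocess.run` on a machine where Docker is installed.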

Running Microservices

We can deploy microservices-based applications inside VMs. However, this approach has some resource and performance limitations, as a VM runs a complete operating system and takes a while to boot.

Docker containers give each microservice its own isolated environment, which makes them a natural fit for applications designed around a microservices architecture. We can deploy and update each microservice separately, and this isolation also lets us scale each one independently.

Another advantage of moving VM-hosted applications to Docker containers is lower total cost. Infrastructure-as-a-service (IaaS) costs for workloads running on the public cloud are lower since we need fewer VMs. Workloads running on-premises need fewer servers, reducing the hardware costs.

Taking Advantage of Multi-Cloud

Docker containers offer agility and portability. We can seamlessly move them from one cloud environment to another with minimal or no configuration changes.

This level of portability enables us to test an application on multiple cloud environments. Should the application crash, the failure affects only its container and doesn’t bring down, say, the entire virtual machine.

Docker containers can run consistently in various infrastructure environments, which helps prevent vendor lock-in. However, be cautious about relying on proprietary cloud vendor tools, as they can reintroduce that lock-in.

Working in Multiple Languages

Software projects usually comprise various modular components that communicate with each other. These may be separate components built on entirely different tech stacks. Separate teams may have made the different parts, or a specific tech stack could be the best fit for one element and not another.

For example, a Node.js and a .NET component could persist data on a MySQL database or MongoDB, depending on the data type. We could also use Redis as a caching mechanism and an automation tool like Ansible. This system is complicated, and managing and scaling all these components can be challenging. These components may have to run separately using different package managers. We must also replicate this setup in the staging and production environments.

Docker helps overcome this challenge. We can containerize all these components and run them in separate containers, each with its own dependencies, and then use Docker Compose to manage the containers together. We write the Compose configuration once and use a single command to create and start all the services it defines.
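As a rough illustration of what such a configuration might contain, the Python sketch below describes the example stack as a Compose-style mapping and prints it as JSON. The service names, build directories, dependency wiring, and placeholder password are all assumptions made for this example; the keys (`services`, `build`, `image`, `depends_on`, `environment`) are standard Compose file fields.

```python
import json


def build_compose_config():
    """Describe the example stack as a Compose-style mapping."""
    return {
        "services": {
            # Application components, each built from its own Dockerfile.
            "node-api": {
                "build": "./node-api",            # hypothetical directory
                "depends_on": ["mongo", "redis"],
            },
            "dotnet-api": {
                "build": "./dotnet-api",          # hypothetical directory
                "depends_on": ["mysql", "redis"],
            },
            # Backing services, pulled as official images from Docker Hub.
            "mysql": {
                "image": "mysql:8",
                "environment": {"MYSQL_ROOT_PASSWORD": "change-me"},  # placeholder
            },
            "mongo": {"image": "mongo"},
            "redis": {"image": "redis"},
        }
    }


if __name__ == "__main__":
    # JSON is a subset of YAML, the format Compose files are written in.
    print(json.dumps(build_compose_config(), indent=2))
```

Because JSON is a subset of YAML, saving the output to a file such as `compose.json` and running `docker compose -f compose.json up` should create and start all five services, although in practice most teams write the same structure by hand in a YAML file.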

Testing and Deploying Applications

An application’s testing and production environments usually differ. So, when we deploy an application to production, we need to use a different set of configurations and consider some other environmental factors. This difference can cause unanticipated issues.

Instead, we can test applications inside Docker containers and deploy them into production using the same containers. This means we effectively test the applications in the same environment where they’ll run once they’re in production. We don’t need to worry much about the configuration differences between the testing and production environments.
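One common way to put this into practice is to run the exact same image in both environments and pass in only the settings that differ as environment variables. The Python sketch below builds the two `docker run` commands to show how little changes between them. The image reference, container names, and variable values are hypothetical; `--env` is the standard `docker run` option for setting an environment variable.

```python
import shlex

IMAGE = "registry.example.com/shop-api:1.4.2"  # hypothetical image reference

# Only these settings differ between environments (illustrative values).
ENVIRONMENTS = {
    "testing": {"APP_ENV": "testing", "DATABASE_URL": "mysql://test-db:3306/shop"},
    "production": {"APP_ENV": "production", "DATABASE_URL": "mysql://prod-db:3306/shop"},
}


def run_command(environment):
    """Build a `docker run` command that starts IMAGE for one environment."""
    argv = ["docker", "run", "--detach", "--name", f"shop-api-{environment}"]
    for key, value in sorted(ENVIRONMENTS[environment].items()):
        argv += ["--env", f"{key}={value}"]
    argv.append(IMAGE)  # the same image, whatever the environment
    return argv


if __name__ == "__main__":
    for environment in ENVIRONMENTS:
        print(shlex.join(run_command(environment)))
```

The image that passed testing is byte-for-byte the one that reaches production, so any remaining differences are confined to the values injected at start-up.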

Conclusion

Docker has gained mass popularity because Docker containers provide agility and accelerate application development and innovation. They also help us implement DevOps.

The main advantages of using Docker are speed, portability, scalability, and reduced resource consumption. Docker is also language and environment agnostic, meaning we can containerize any application, regardless of the tech stack, on a local machine or various cloud environments.

Learn more about how Docker containers can help you and your organization make development and DevOps more efficient.

The images were created by the author! Feel free to use/recreate them as you see fit.
